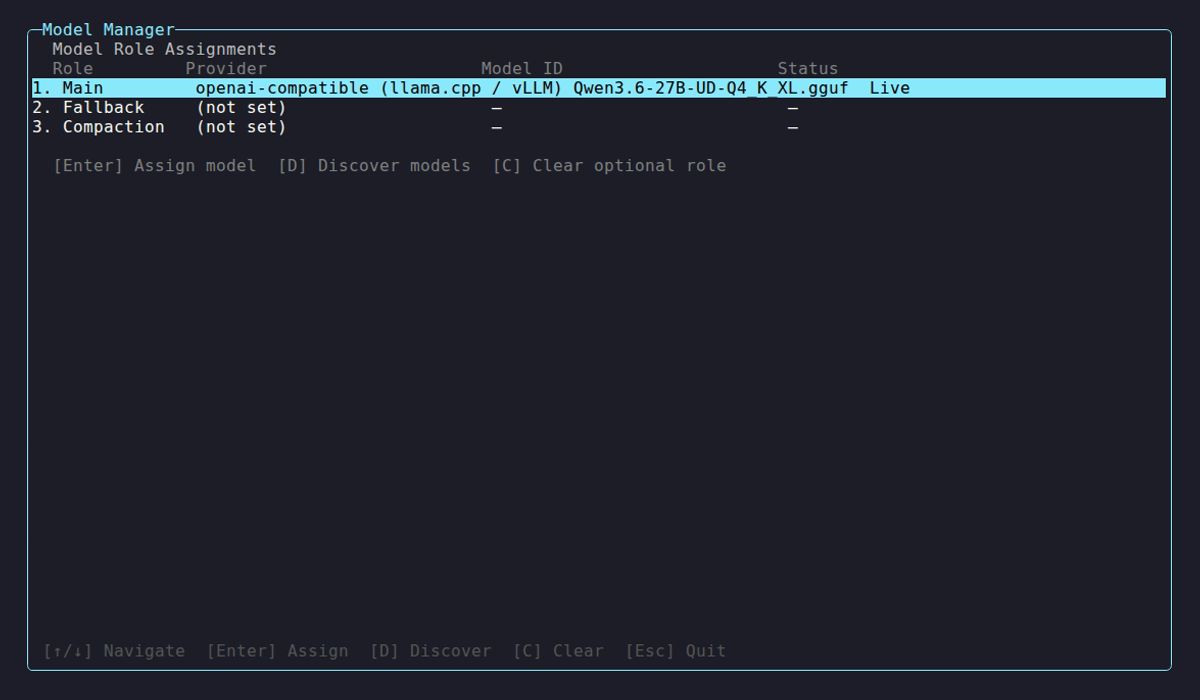

Models

Netclaw assigns LLMs to three roles in the Models section of ~/.netclaw/config/netclaw.json: Main, Fallback, and Compaction. Only Main is required. The other two route to Main when unset.

Before assigning models, you need at least one provider configured. See Managed Providers or Self-Hosted Providers.

For CLI commands that manage models interactively, see netclaw model.

Model roles

Section titled “Model roles”| Role | Purpose | Required? |

|---|---|---|

| Main | Primary model for all interactions | Yes — defaults to qwen3:30b on local-ollama |

| Fallback | Automatic failover when Main is unavailable | No — routes to Main when unset |

| Compaction | Cheaper/faster model for context summarization | No — routes to Main when unset |

Configuration schema

Section titled “Configuration schema”Each role is an object under Models with these fields:

| Field | Type | Default | Description |

|---|---|---|---|

Provider | string | "local-ollama" | Key into the Providers dictionary |

ModelId | string | "qwen3:30b" | Model identifier as used by the provider API |

ContextWindow | int? | null | Clamps the runtime context window in tokens; takes precedence over provider-reported value |

Provenance | enum? | null | Read-only. Set by the CLI: "Live" (discovered from provider), "Defaults" (curated defaults), or "Manual" (model set) |

InputModalities | flags enum? | null | Override input modalities, e.g. "Text, Image". Values: Text, Image, Audio, Video |

OutputModalities | flags enum? | null | Override output modalities (same values, e.g. "Text") |

The schema enforces additionalProperties: false, so only Main, Fallback, and Compaction are valid role names.

Minimal config

Section titled “Minimal config”With Ollama running locally and qwen3:30b pulled, this is all you need. The Provider value must match a key you’ve defined under Providers in the same config file.

{ "Models": { "Main": { "Provider": "local-ollama", "ModelId": "qwen3:30b" } }}Context window is auto-detected from Ollama.

Production config

Section titled “Production config”Here’s a more realistic setup: 30B for Main, 8B for Fallback (resilience if the big model goes down), same 8B for Compaction (summarization doesn’t need a big model):

{ "Models": { "Main": { "Provider": "remote-gpu", "ModelId": "qwen3:30b", "ContextWindow": 32768 }, "Fallback": { "Provider": "remote-gpu", "ModelId": "qwen3:8b", "ContextWindow": 32768 }, "Compaction": { "Provider": "remote-gpu", "ModelId": "qwen3:8b" } }}Context window resolution

Section titled “Context window resolution”Config takes precedence over anything the provider reports:

ContextWindowvalue in config (highest priority)- Provider-detected value (via

/api/show,/v1/models, etc.) - Default: 32,768 tokens

Any role with an explicit ContextWindow must set it to at least 4,096 tokens. If Main’s ContextWindow exceeds what the provider reports, the daemon refuses to start and tells you why.

Capability detection

Section titled “Capability detection”Netclaw auto-detects what a model supports (context window, modalities) by walking this list until something answers:

- Built-in static catalog (covers well-known models with zero network cost)

- Ollama

/api/show— only when the provider type isollama - OpenAI-compatible

/v1/modelsmetadata — only when the provider type isopenai-compatible - OpenRouter public catalog

- HuggingFace capability resolver

- Text-only defaults (32,768 token context window)

If your provider misreports capabilities (say, an Ollama model supports vision but detection shows text-only), set InputModalities or OutputModalities in config to override detection.

Failover

Section titled “Failover”When Fallback is configured, netclaw wraps both models in a failover layer. If Main throws after exhausting retries, the request goes to Fallback automatically.

Retries happen first: 3 attempts with exponential backoff (1s base, 30s max, ±25% jitter). Retried errors: network failures, HTTP 408/429/5xx, TaskCanceledException, TimeoutException. Only after all retries fail does failover kick in.

There’s a catch with streaming. Failover only applies if Main fails before the first chunk reaches the caller. Once a chunk has been emitted, failures propagate directly. Splicing two model responses together mid-stream would produce garbage, so netclaw doesn’t try.

| Event | Alert Level |

|---|---|

| Main fails, Fallback takes over | provider.failover — Warning |

| Both Main and Fallback fail | provider.unreachable — Critical |

If Fallback is not configured, failed retries on Main surface the error directly.

Compaction

Section titled “Compaction”Compaction is for background LLM work: summarizing conversation context when it grows too long, generating session titles, extracting memories. These don’t need your best model. An 8B handles them fine and saves compute for actual conversations.

Compaction fires when context reaches 75% of the context window. You can tune this with Session.CompactionThreshold.

Environment variable overrides

Section titled “Environment variable overrides”You can override any model field with NETCLAW_ environment variables. Double underscores separate path segments, following the .NET configuration convention:

export NETCLAW_Models__Main__Provider="openrouter"export NETCLAW_Models__Main__ModelId="anthropic/claude-sonnet-4"export NETCLAW_Models__Main__ContextWindow="200000"These take highest priority, overriding anything in netclaw.json. On Linux, variable names are case-sensitive.

Validation errors

Section titled “Validation errors”| Condition | Result |

|---|---|

Main Provider or ModelId is empty | Startup fails |

ContextWindow < 4,096 on any role | Startup fails |

Main ContextWindow exceeds provider-reported value | Startup fails with descriptive error |

Unknown role name in Models | Config schema rejects it |

Provider key doesn’t exist in Providers | netclaw model set rejects it; lists configured providers |

Applying changes

Section titled “Applying changes”All model config changes require a daemon restart:

netclaw daemon restartVerify models are picked up:

netclaw model list # reads from confignetclaw status # shows what the running daemon is usingTypical setup sequence

Section titled “Typical setup sequence”- Configure a provider (Managed Providers or Self-Hosted Providers)

- Assign models to roles (this page, or

netclaw model set) - Restart the daemon

- Verify with

netclaw status

Related pages

Section titled “Related pages”netclaw model— CLI reference for model management (TUI,set,discover,list,clear)- Managed Providers — configure cloud providers (OpenRouter, Anthropic, OpenAI)

- Self-Hosted Providers — configure Ollama, llama.cpp, vLLM

- Secrets Management — how credentials are encrypted and stored

Resources

Section titled “Resources”- Ollama model library — browse models for local inference

- Ollama modelfile parameters — context window and other model-level settings

- OpenRouter model catalog — compare models across providers with pricing

- .NET environment variable configuration — the double-underscore nesting convention